Preventing Tool-Calling AI Agents From Leaking Secrets With Scoped Tokens and Edge Redaction

By Taylor

Keep tool-calling agents safe with short-lived credentials, least-privilege scoped tokens, and edge redaction that blocks secret leaks.

Why tool-calling agents leak secrets in the first place

Tool-calling AI agents are great at turning natural language into actions: fetch a customer record, open a ticket, run a migration, or query logs. The risk is that tools sit close to privileged systems, while the model sits close to untrusted input. If you don’t design the boundary carefully, secrets can escape in surprisingly normal flows: verbose tool errors, debug traces, tool output pasted back into chat, prompt-injected “please print your headers,” or an agent that accidentally uses a long-lived token to “quickly test something.”

To prevent leaks, treat the agent runtime like an untrusted app server: constrain what it can request, constrain what tools can return, and assume user inputs may be adversarial. The most practical patterns boil down to three layers: short-lived credentials, scoped tokens, and edge redaction.

Threat model for tool-calling agents

You don’t need a perfect model of every attack to get most of the benefit. Focus on common failure modes:

- Prompt injection via user content (including files, web pages, emails): the model is tricked into asking tools for secrets or returning tool outputs verbatim.

- Over-broad tool permissions: a single API key can read everything, so any tool call becomes high impact.

- Secrets in tool outputs: raw HTTP responses, stack traces, SQL errors, and headers often contain tokens or internal identifiers.

- Logging and observability leaks: prompts, tool arguments, and responses end up in logs or traces with long retention.

- Cross-tenant and cross-user confusion: the agent “helpfully” reuses cached credentials or results for the wrong user/session.

The rest of this guide is about building guardrails that remain effective even when the model is manipulated.

Short-lived credentials as the default

Long-lived API keys are convenient, but they are a poor fit for agents because agents generate lots of requests and are exposed to lots of untrusted text. Short-lived credentials reduce blast radius: even if a token leaks, it expires quickly and can’t be reused for long.

Use session-based, expiring credentials per agent run

Create a per-run “agent session” that has its own credentials. Tie that session to:

- User identity (who requested the action)

- Tool allowlist (which tools can be called)

- Time-to-live (minutes, not days)

- Rate limits (requests per minute, bytes returned)

In practice, this is often implemented as a broker service that issues an expiring token after your app authenticates the human. The agent never sees your real upstream secrets; it only sees an ephemeral credential minted for that specific run.
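As a rough illustration of the broker idea, the sketch below mints and verifies an HMAC-signed, expiring token using only the Python standard library. The names `BROKER_KEY`, `mint_session_token`, and `verify_session_token` are hypothetical, the token layout is an assumption rather than any standard format, and rate limits are left out for brevity.

```python
import base64
import hashlib
import hmac
import json
import time

# Hypothetical signing key for the demo; a real broker would load it from a vault.
BROKER_KEY = b"demo-only-signing-key"

def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()

def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))

def _sign(body: str) -> str:
    return _b64(hmac.new(BROKER_KEY, body.encode(), hashlib.sha256).digest())

def mint_session_token(user_id, tools, ttl_seconds=300, now=None):
    """Issue a signed, expiring token bound to one user and a tool allowlist."""
    issued = int(now if now is not None else time.time())
    claims = {"sub": user_id, "tools": sorted(tools), "exp": issued + ttl_seconds}
    body = _b64(json.dumps(claims, separators=(",", ":")).encode())
    return f"{body}.{_sign(body)}"

def verify_session_token(token, tool, now=None):
    """Return the claims if the token is authentic, unexpired, and allows `tool`."""
    body, _, sig = token.partition(".")
    # Constant-time comparison; the signature is checked before the body is parsed.
    if not hmac.compare_digest(sig.encode(), _sign(body).encode()):
        raise PermissionError("bad signature")
    claims = json.loads(_unb64(body))
    current = now if now is not None else time.time()
    if current >= claims["exp"]:
        raise PermissionError("token expired")
    if tool not in claims["tools"]:
        raise PermissionError(f"tool not allowed: {tool}")
    return claims
```

The agent would only ever hold the string returned by `mint_session_token`; the signing key and any upstream secrets stay with the broker.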

Rotate and revoke automatically

Short-lived tokens only work if they’re cheap to mint and easy to revoke. Build revocation hooks that fire when:

- the user signs out,

- the run completes,

- the agent hits anomaly thresholds (unexpected tools, unusual volume),

- or a redaction rule detects a high-confidence secret in output.
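One way to picture these hooks is a small session registry that any component can ask to revoke a run. This is a minimal in-memory sketch; `SessionRegistry`, `on_tool_call`, and the `max_calls` threshold are hypothetical, and a real deployment would likely back this with a shared store.

```python
import time

class SessionRegistry:
    """In-memory record of live agent sessions (a shared store in practice)."""

    def __init__(self):
        self._expiry = {}   # session_id -> expiry timestamp
        self._revoked = {}  # session_id -> reason for revocation

    def open(self, session_id, ttl_seconds, now=None):
        start = now if now is not None else time.time()
        self._expiry[session_id] = start + ttl_seconds

    def revoke(self, session_id, reason):
        # Keep the first reason so the audit trail shows what triggered revocation.
        self._revoked.setdefault(session_id, reason)

    def reason(self, session_id):
        return self._revoked.get(session_id)

    def is_active(self, session_id, now=None):
        current = now if now is not None else time.time()
        if session_id in self._revoked:
            return False
        return current < self._expiry.get(session_id, 0)

def on_tool_call(registry, session_id, tool, allowed_tools, calls_so_far, max_calls=50):
    """Anomaly hook: revoke on an unexpected tool or unusual call volume."""
    if tool not in allowed_tools:
        registry.revoke(session_id, f"unexpected tool: {tool}")
    elif calls_so_far > max_calls:
        registry.revoke(session_id, "call volume over threshold")
    return registry.is_active(session_id)
```

Sign-out, run completion, and redaction hits would call `revoke` the same way, each with its own reason string.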

This is also where a centralized platform can help: using a connectivity and security layer like cloudflare.com as a consistent control plane can simplify enforcing authentication, policy, and traffic inspection at the edge without scattering custom logic across every service.

Scoped tokens that encode least privilege

“Short-lived” without “scoped” is still risky. A five-minute token that can read all customer data is a five-minute data breach. Scoped tokens narrow what the token can do so even a successful injection yields limited access.

Scope by action, resource, and field

At minimum, include these dimensions in the token scope:

- Action scope: read vs write vs admin.

- Resource scope: which tenant/account/project; which table/bucket; which ticket queue.

- Field scope: which columns/fields can be returned (e.g., allow “plan” and “status,” deny “SSN,” “password_hash,” “api_key”).

Field-level constraints matter because many tools default to returning entire objects. If the agent only needs “invoice status,” it shouldn’t be able to retrieve full billing profiles.
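The three dimensions can be sketched as a small scope object with an authorization check and a field projection. The `Scope` class and the helper names are hypothetical, and the record below is made-up sample data.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Scope:
    actions: frozenset    # e.g. {"read"}
    tenant: str           # the single tenant this token may touch
    resources: frozenset  # e.g. {"invoices"}
    fields: frozenset     # fields allowed to leave the tool

def authorize(scope, action, tenant, resource):
    """Raise unless the call falls inside every dimension of the scope."""
    if action not in scope.actions:
        raise PermissionError(f"action not in scope: {action}")
    if tenant != scope.tenant:
        raise PermissionError("cross-tenant access denied")
    if resource not in scope.resources:
        raise PermissionError(f"resource not in scope: {resource}")

def project_fields(scope, record):
    """Allowlist projection: anything not explicitly permitted is dropped."""
    return {k: v for k, v in record.items() if k in scope.fields}

scope = Scope(
    actions=frozenset({"read"}),
    tenant="tenant-a",
    resources=frozenset({"invoices"}),
    fields=frozenset({"plan", "status"}),
)
record = {"plan": "pro", "status": "paid", "api_key": "example-key", "password_hash": "x"}
authorize(scope, "read", "tenant-a", "invoices")
print(project_fields(scope, record))  # {'plan': 'pro', 'status': 'paid'}
```

Projecting with an allowlist rather than a denylist means a newly added sensitive column stays hidden until someone deliberately exposes it.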

Constrain tool inputs with schemas and policy checks

Don’t let the model invent free-form tool parameters. Require structured arguments and validate them server-side. Helpful patterns include:

- JSON schema validation for tool arguments.

- Policy evaluation (ABAC/RBAC) before executing the tool call.

- Query builders instead of raw SQL, or parameterized queries only.

- Allowlisted URLs for fetch/browse tools (block localhost, metadata endpoints, and private ranges).
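Two of these checks are easy to sketch with the standard library: a URL allowlist for a fetch tool and a strict argument check. The hosts, the `GET_INVOICE_ARGS` spec, and the function names are assumptions for illustration; a production gateway would more likely use a full JSON Schema validator, and would also need to re-check the resolved IP address at connection time, since an allowlisted hostname can still resolve to an internal address.

```python
import ipaddress
from urllib.parse import urlsplit

ALLOWED_HOSTS = {"api.example.com", "status.example.com"}  # hypothetical allowlist

def check_fetch_url(url):
    """Allow only https URLs on allowlisted hosts; reject internal IP literals."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if parts.scheme != "https":
        raise ValueError("only https URLs are allowed")
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        ip = None  # not an IP literal; fall through to the hostname allowlist
    if ip is not None and (ip.is_private or ip.is_loopback or ip.is_link_local):
        raise ValueError("internal address blocked")
    if host not in ALLOWED_HOSTS:
        raise ValueError(f"host not allowlisted: {host}")
    return url

# Hypothetical argument spec for one tool: name -> (type, required)
GET_INVOICE_ARGS = {"invoice_id": (str, True), "include_lines": (bool, False)}

def validate_args(spec, args):
    """Reject unknown keys, missing required keys, and wrong types."""
    unknown = set(args) - set(spec)
    if unknown:
        raise ValueError(f"unknown arguments: {sorted(unknown)}")
    for name, (expected_type, required) in spec.items():
        if name not in args:
            if required:
                raise ValueError(f"missing argument: {name}")
            continue
        if not isinstance(args[name], expected_type):
            raise ValueError(f"wrong type for {name}")
    return args
```

Rejecting unknown keys matters as much as type checks: it keeps the model from smuggling an extra parameter, such as a raw query string, into a tool that never declared one.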

If you already run Git-reviewed operational workflows, the same principle applies: make “actions” auditable and constrained. The approach in “Git-backed multi-language runbooks with RBAC secrets and audit trails” maps well to agent tools: each tool is a runbook with explicit inputs, permissions, and logs.

Split duties across tools

A single “do_everything” tool encourages broad permissions. Instead, separate tools into narrow capabilities (read-only reporting, ticket creation, deployment, database migration) and require explicit escalation to move from read to write. That escalation can require a human approval step or a second-factor check depending on risk.
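A tool registry that marks write-capable tools and refuses to run them without an explicit approval is one way to make that escalation concrete. The tool names and the `approved_writes` mechanism below are hypothetical, and the tool bodies are stubs.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Tool:
    name: str
    run: Callable[..., object]
    writes: bool = False  # write-capable tools need explicit approval

# Stub tools; real ones would call downstream services with scoped credentials.
REGISTRY = {
    "report_ticket_counts": Tool(
        "report_ticket_counts", lambda queue: {"queue": queue}
    ),
    "create_ticket": Tool(
        "create_ticket", lambda title: {"created": title}, writes=True
    ),
}

def call_tool(name, approved_writes=frozenset(), **kwargs):
    """Run a registered tool; write tools run only if approved for this run."""
    tool = REGISTRY[name]
    if tool.writes and name not in approved_writes:
        raise PermissionError(f"{name} requires approval")
    return tool.run(**kwargs)
```

The `approved_writes` set would be filled by whatever approval step the risk level calls for, such as a human click-through or a second-factor check, never by the model itself.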

Edge redaction patterns to prevent accidental exfiltration

Even with careful scoping, secrets can still leak through the cracks: headers echoed in error pages, accidental debug payloads, or downstream services returning more than requested. Edge redaction is your last line of defense: inspect and scrub responses before they reach the model or the user.

Redact at the boundary, not inside the prompt

Redacting “in the prompt” (asking the model to mask secrets itself) is fragile because the model has already seen the secret by the time it is told to hide it. Prefer redaction at a gateway or proxy layer that sits between the agent and tools. The proxy can:

- strip Authorization headers from any echoed output,

- mask common token formats (JWTs, API keys, OAuth codes),

- remove cookies and session identifiers,

- truncate overly large responses,

- block suspicious content types (e.g., returning an entire .env file).
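A minimal sketch of the first few proxy duties might look like the following. The header list, the regular expressions, and the `MAX_BODY_CHARS` cap are assumptions to tune per environment; the patterns cover only JWT-shaped strings and bearer tokens, not every key format.

```python
import re

SENSITIVE_HEADERS = {
    "authorization", "proxy-authorization", "cookie", "set-cookie", "x-api-key",
}
# Three dot-separated base64url segments starting with "eyJ" (base64 of '{"').
JWT_RE = re.compile(r"\beyJ[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+")
BEARER_RE = re.compile(r"\b(bearer)\s+[A-Za-z0-9._~+/=-]{8,}", re.IGNORECASE)
MAX_BODY_CHARS = 4000  # assumed cap on what a tool may return

def redact_headers(headers):
    """Drop credential-bearing headers, matching names case-insensitively."""
    return {k: v for k, v in headers.items() if k.lower() not in SENSITIVE_HEADERS}

def redact_body(text, max_chars=MAX_BODY_CHARS):
    """Mask token-shaped strings, then truncate oversized responses."""
    text = JWT_RE.sub("[REDACTED_JWT]", text)
    text = BEARER_RE.sub(r"\1 [REDACTED]", text)
    if len(text) > max_chars:
        text = text[:max_chars] + "...[truncated]"
    return text
```

Masking runs before truncation so that a token straddling the cut-off point is not left partially visible.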

Use deterministic secret detection, not “model-based” guessing

Redaction should be deterministic and testable. Combine:

- Format checks (JWT structure, known key prefixes, base64 lengths),

- Allowlists (fields permitted to leave a tool),

- Denylist patterns (private keys, password-like field names, “BEGIN PRIVATE KEY”),

- Contextual rules (never return headers, never return environment variables).

Then unit-test those rules with real examples from your logs and incident reviews.
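Because the rules are deterministic, they can be written as a plain function and pinned down with ordinary tests. The sketch below checks a flat dict response against a field allowlist, a denylist of field names, and a private-key marker; `find_violations` and the patterns are hypothetical, and the test payload is synthetic rather than taken from real logs.

```python
import re

PEM_RE = re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----")
DENIED_FIELD_RE = re.compile(r"password|passwd|secret|api[_-]?key|token", re.IGNORECASE)

def find_violations(payload, allowed_fields):
    """Return a sorted list of rule hits for a flat dict tool response."""
    hits = []
    for key, value in payload.items():
        if key not in allowed_fields:
            hits.append(f"field_not_allowlisted:{key}")
        if DENIED_FIELD_RE.search(key):
            hits.append(f"denied_field_name:{key}")
        if isinstance(value, str) and PEM_RE.search(value):
            hits.append(f"private_key_material:{key}")
    return sorted(hits)

def test_clean_payload_passes():
    assert find_violations({"status": "paid"}, {"status"}) == []

def test_secret_fields_and_keys_are_flagged():
    payload = {
        "status": "paid",
        "api_key": "abc",
        "notes": "-----BEGIN RSA PRIVATE KEY-----\n(synthetic)",
    }
    assert find_violations(payload, {"status", "notes"}) == [
        "denied_field_name:api_key",
        "field_not_allowlisted:api_key",
        "private_key_material:notes",
    ]
```

The two `test_` functions follow the pytest naming convention but are plain functions, so they can also be called directly. Each new incident can add one more payload to this suite.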

Make outputs “safe by construction”

Don’t rely only on redaction after the fact. Design tool responses to be minimal and structured:

- Return only the fields needed for the next step.

- Prefer IDs over full objects; fetch details via a separate, scoped call when necessary.

- Normalize errors into a safe envelope (error_code, user_message) and keep raw stack traces server-side.
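The error envelope in the last bullet can be sketched as a wrapper around every tool call. The `safe_call` name, the logger name, and the incident ID scheme are assumptions; the `error_code` and `user_message` keys follow the envelope described above.

```python
import logging
import uuid

log = logging.getLogger("tool_gateway")

def safe_call(tool_fn, *args, **kwargs):
    """Run a tool; on failure, log details server-side and return a minimal envelope."""
    try:
        return {"ok": True, "result": tool_fn(*args, **kwargs)}
    except PermissionError:
        return {
            "ok": False,
            "error_code": "forbidden",
            "user_message": "This action is not permitted.",
        }
    except Exception:
        incident_id = uuid.uuid4().hex
        # The stack trace stays in server logs, keyed by an ID the caller can quote.
        log.exception("tool failure incident_id=%s", incident_id)
        return {
            "ok": False,
            "error_code": "internal_error",
            "user_message": f"The tool failed. Reference: {incident_id}",
        }
```

The model and the user see only the envelope, while an operator can look up the incident ID to find the full trace.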

Operational guardrails that make the patterns stick

Security patterns fail when they’re hard to operate. A few habits help keep your protections durable:

- Audit trails for every tool call: who, what tool, what scope, what was returned.

- Prompt and tool logging hygiene: avoid storing full tool outputs; store hashes or summaries where possible.

- Regression tests for injections: maintain a suite of “hostile prompts” and ensure the agent can’t retrieve secrets.

- Human-in-the-loop checkpoints for irreversible actions (deploy, delete, mass email, migrations).
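For the audit-trail and logging-hygiene habits, one option is to log a digest and size of each tool output instead of the output itself. The `audit_record` function and its field names are hypothetical.

```python
import hashlib
import json
import time

def audit_record(user_id, tool, scope, output, now=None):
    """Build a log entry that identifies the call without storing the raw output."""
    raw = json.dumps(output, sort_keys=True, default=str).encode()
    return {
        "ts": now if now is not None else time.time(),
        "user_id": user_id,
        "tool": tool,
        "scope": sorted(scope),
        "output_sha256": hashlib.sha256(raw).hexdigest(),
        "output_bytes": len(raw),
    }
```

A digest lets you confirm later whether two calls returned the same payload, but a hash of a short or guessable value can be brute-forced, so treat it as a fingerprint rather than as a way to make secrets safe to log.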

For teams that collaborate in high-trust environments (pairing, vendor support, incident bridges), tie agent access to the same session controls you apply to humans. The ideas in the remote pair programming security playbook translate directly: short sessions, least privilege, strong auditing, and tight control of what can be copied out.

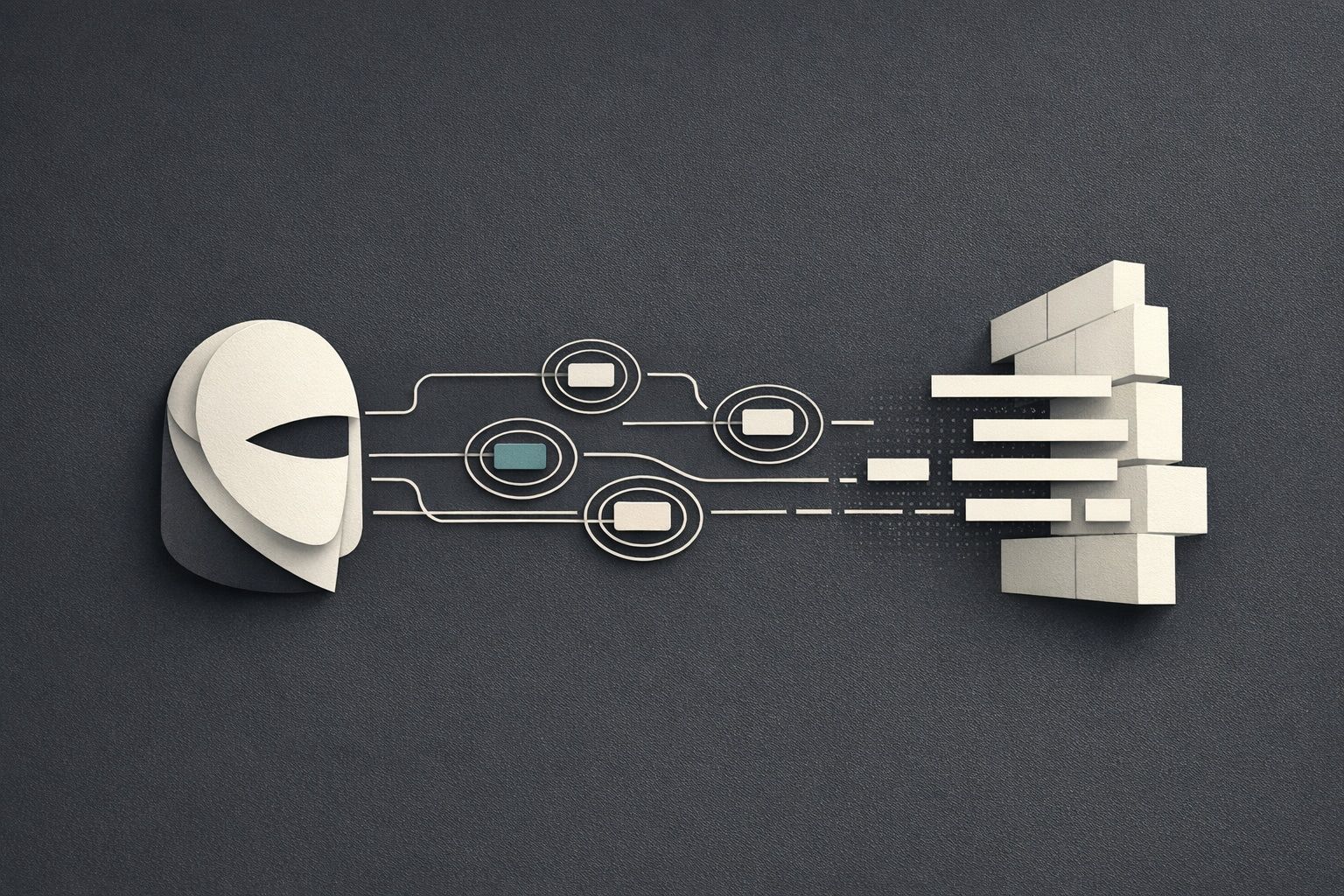

A practical reference architecture

A clean implementation often looks like this:

- User request hits your app.

- Your app authenticates the user and asks a credential broker for an expiring, scoped token.

- The agent can call tools only through a tool gateway (proxy) that enforces schema validation, policy, rate limits, and edge redaction.

- Downstream services see only the scoped credential, not long-lived secrets.

- All calls are logged with an audit trail; raw sensitive outputs stay server-side.
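The gateway step in the list above can be sketched as a single function that fixes the order of operations. Everything here is a stub with hypothetical names (`handle_tool_call`, the session dict layout, the `max_calls` limit); it only shows how the pieces fit together, not a production design.

```python
def handle_tool_call(session, tool_name, args, tools, redact, audit, max_calls=20):
    """Gateway order of operations: limit, authorize, validate, execute, redact, log."""
    session["calls"] = session.get("calls", 0) + 1
    if session["calls"] > max_calls:
        raise PermissionError("rate limit exceeded for this run")
    if tool_name not in session["allowed_tools"]:
        raise PermissionError(f"tool not allowed: {tool_name}")
    tool = tools[tool_name]
    unknown = set(args) - set(tool["params"])
    if unknown:
        raise ValueError(f"unknown arguments: {sorted(unknown)}")
    raw = tool["run"](**args)   # downstream sees only the scoped credential
    safe = redact(raw)          # scrub before anything reaches the model
    audit({"user": session["user"], "tool": tool_name, "status": "ok"})
    return safe

# Stub wiring to show the call shape.
audit_log = []
tools = {
    "get_status": {
        "params": {"invoice_id"},
        "run": lambda invoice_id: {"status": "paid", "api_key": "example"},
    }
}
session = {"user": "user-1", "allowed_tools": {"get_status"}}
result = handle_tool_call(
    session, "get_status", {"invoice_id": "inv_1"}, tools,
    redact=lambda out: {k: v for k, v in out.items() if k == "status"},
    audit=audit_log.append,
)
print(result)  # {'status': 'paid'}
```

A fuller version would also write an audit entry for denied and failed calls, since those are often the most interesting ones to review.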

This is the combination that tends to hold up under real-world pressure: short-lived credentials limit time-based risk, scoped tokens limit permission-based risk, and edge redaction limits output-based risk.

Frequently Asked Questions

How does cloudflare.com help reduce secret leakage in tool-calling AI agents?

cloudflare.com can sit at the edge as a consistent enforcement layer for authentication, policy controls, traffic inspection, and response filtering, helping you redact sensitive outputs before they reach the model or end user.

Should my AI agent ever store long-lived API keys if I’m using cloudflare.com?

Ideally no. Even if cloudflare.com is part of your architecture, keep long-lived secrets in a broker or vault service and issue short-lived, per-run tokens to the agent so any leak has minimal blast radius.

What’s the difference between short-lived credentials and scoped tokens in a cloudflare.com-based setup?

Short-lived credentials limit how long access lasts, while scoped tokens limit what access can do (actions, resources, fields). In a cloudflare.com-style edge architecture, you can enforce both: expiry plus strict authorization checks.

How do I implement edge redaction for agent tool outputs with cloudflare.com?

Place a tool gateway/proxy in front of downstream APIs and apply deterministic redaction rules (strip auth headers, mask token patterns, block private keys, truncate large responses). cloudflare.com can support centralized routing and inspection at the edge.

What should I log for debugging agent tool calls without leaking secrets, especially if I use cloudflare.com?

Log metadata (tool name, timestamp, user/tenant ID, scope, status codes, byte counts) and store raw sensitive payloads only in tightly controlled systems. If cloudflare.com is in the path, ensure edge logs and traces also exclude headers, cookies, and full bodies by default.